The Euler Class and Poincaré Duality

My last few posts have been about zero sections of vector bundles, and how the homology class of a zero section is governed by a cohomology class associated with the vector bundle, known as the Euler class. I worked through some examples where we can compute the Euler class of a vector bundle using another mysterious cohomology class called the Thom class, but never explained where the Thom class comes from. In this post, I will motivate these constructions using intersection theory and Poincaré duality, where cohomology classes are used to determine how different subsets of a manifold may intersect. Proofs of the theorems discussed here can be found, e.g., in these notes by Michael Hutchings.

Zero sets as intersections

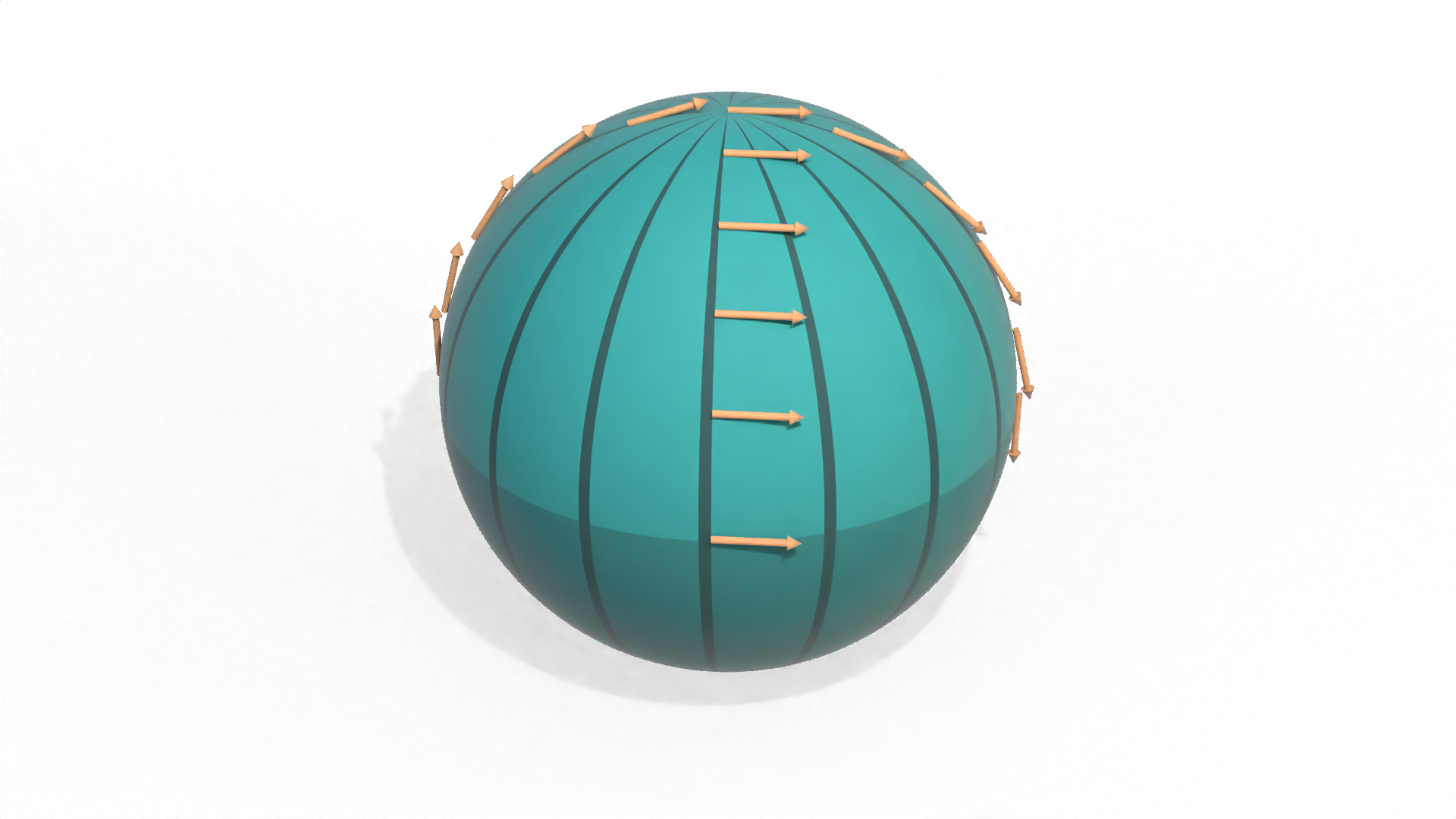

If we have a vector bundle \(\RR^d \to E \to B\) over an \(n\)-dimensional base space \(B\), then a section \(\sigma\) of \(E\) can be viewed as an \(n\)-dimensional submanifold of the \((n+d)\)-dimensional manifold \(E\), namely the graph of \(\sigma\). Then the zero set of \(\sigma\) can be thought of as the intersection of this submanifold with the submanifold arising from the zero section of \(E\).

This intersection picture immediately explains one mysterious fact: why must the zero sets of all sections live in the same homology class in \(B\)? Well, the graphs of any two sections are homotopic to each other (since we can linearly interpolate between the sections in each fiber). And the homology class of the intersection of two submanifolds does not change when you apply a homotopy. So the zero set of any section always resides in some fixed homology class, determined by the vector bundle \(E\).
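
To make the interpolation concrete, here is one way to write it down (the names \(\sigma_0\), \(\sigma_1\), and \(\sigma_t\) are my own labels for illustration). Given two sections \(\sigma_0\) and \(\sigma_1\), define \[\sigma_t(x) = (1-t)\,\sigma_0(x) + t\,\sigma_1(x), \qquad t \in [0,1],\] where the sum is taken in the fiber over \(x\). Each \(\sigma_t\) is again a section because each fiber is a vector space, so \(t \mapsto \sigma_t\) is a homotopy from \(\sigma_0\) to \(\sigma_1\) through sections. Taking \(\sigma_0 = 0\) shows in particular that every section is homotopic to the zero section.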

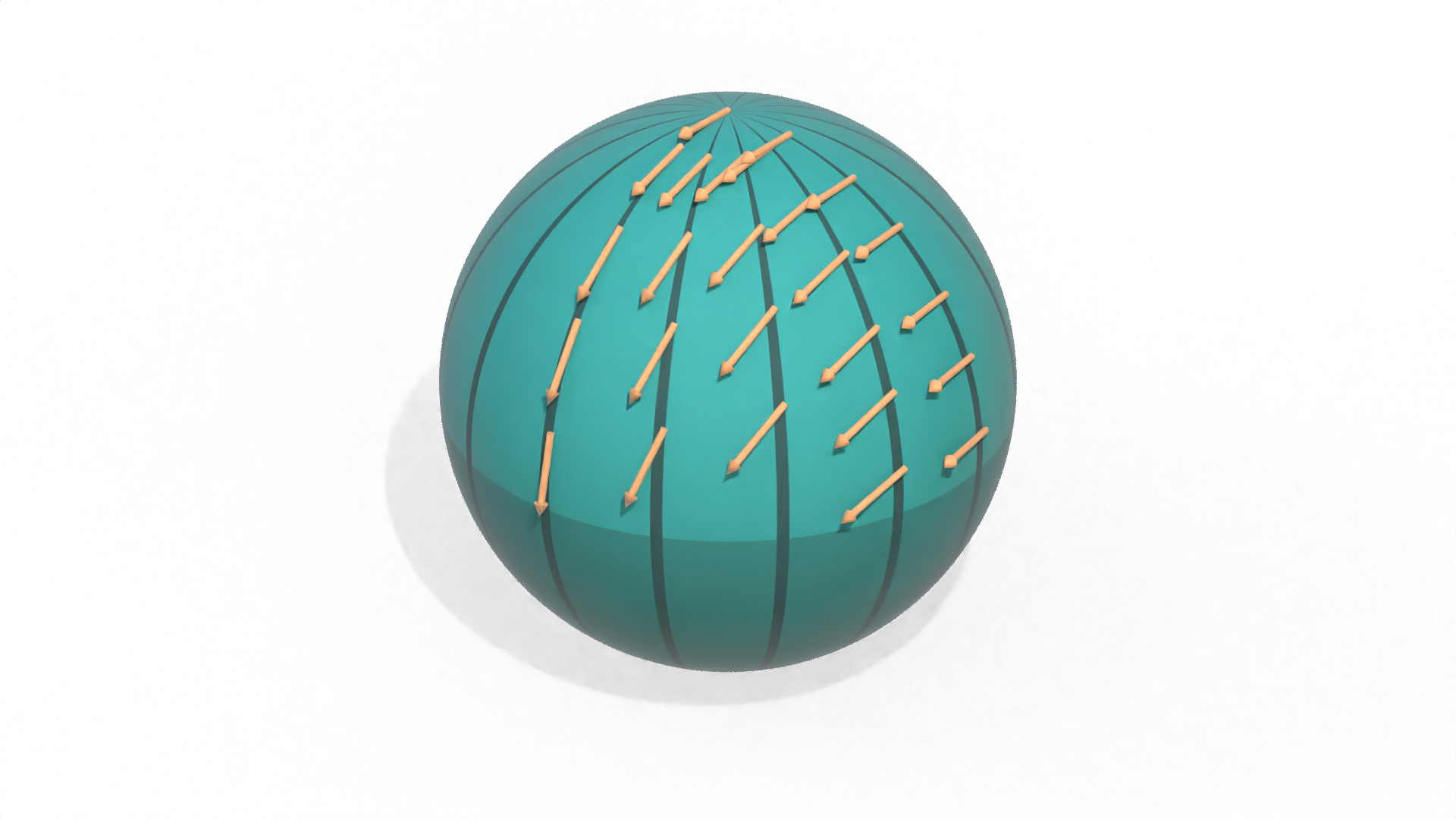

And if we do a little more work, we can figure out what that homology class must be. Under Poincaré duality, we can characterize intersections of submanifolds using products of their dual cohomology classes. In particular, let \(M\) be a compact \(n\)-dimensional manifold without boundary, and let \(A\) and \(B\) be submanifolds of dimension \(i\) and \(j\) respectively, intersecting transversely. Then \(A\) and \(B\) are dual to cohomology classes \(\alpha \in H^{n-i}(M)\) and \(\beta \in H^{n-j}(M)\), where the dual class \(\alpha\) is characterized by the property that for any \((n-i)\)-dimensional submanifold \(S\), the integral \(\int_S \alpha\) of \(\alpha\) along \(S\) equals the signed number of intersections between \(S\) and \(A\) (and similarly for \(\beta\)). The product of these cohomology classes \(\alpha \smile \beta \in H^{n - (i + j - n)}(M)\) is then dual to the \((i+j-n)\)-dimensional intersection \(A \cap B\). If \(M\) has a boundary \(\partial M\), then everything works out basically the same way as long as \(\alpha\) and \(\beta\) are taken to be relative cohomology classes in \(H^\bullet(M, \partial M)\).
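
As a quick sanity check on the degrees, the cup product of a class of degree \(n-i\) with a class of degree \(n-j\) lands in degree \[(n-i) + (n-j) = 2n - i - j = n - (i+j-n),\] which is exactly the degree dual to an \((i+j-n)\)-dimensional submanifold. A standard example, which I am adding here purely as an illustration: take \(M = \mathbb{C}P^2\), so \(n = 4\), and let the two submanifolds be distinct complex projective lines, so \(i = j = 2\). Two distinct lines meet transversely in a single point, which has dimension \(i + j - n = 0\). Both lines are dual to the generator \(h \in H^2(\mathbb{C}P^2)\), and correspondingly \[\langle h \smile h, [\mathbb{C}P^2] \rangle = 1,\] so \(h \smile h \in H^4(\mathbb{C}P^2)\) is dual to a point, as the intersection picture predicts.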

So if we had a cohomology class \(\gamma \in H^d(E)\) which was Poincaré dual to the zero section of \(E\), then we could use it to find the homology class of the zero set of any other section. Furthermore, since all sections are homotopic to each other, this cohomology class will actually be dual to all sections of the vector bundle! And this special cohomology class is precisely the Thom class.

Finding the Thom class

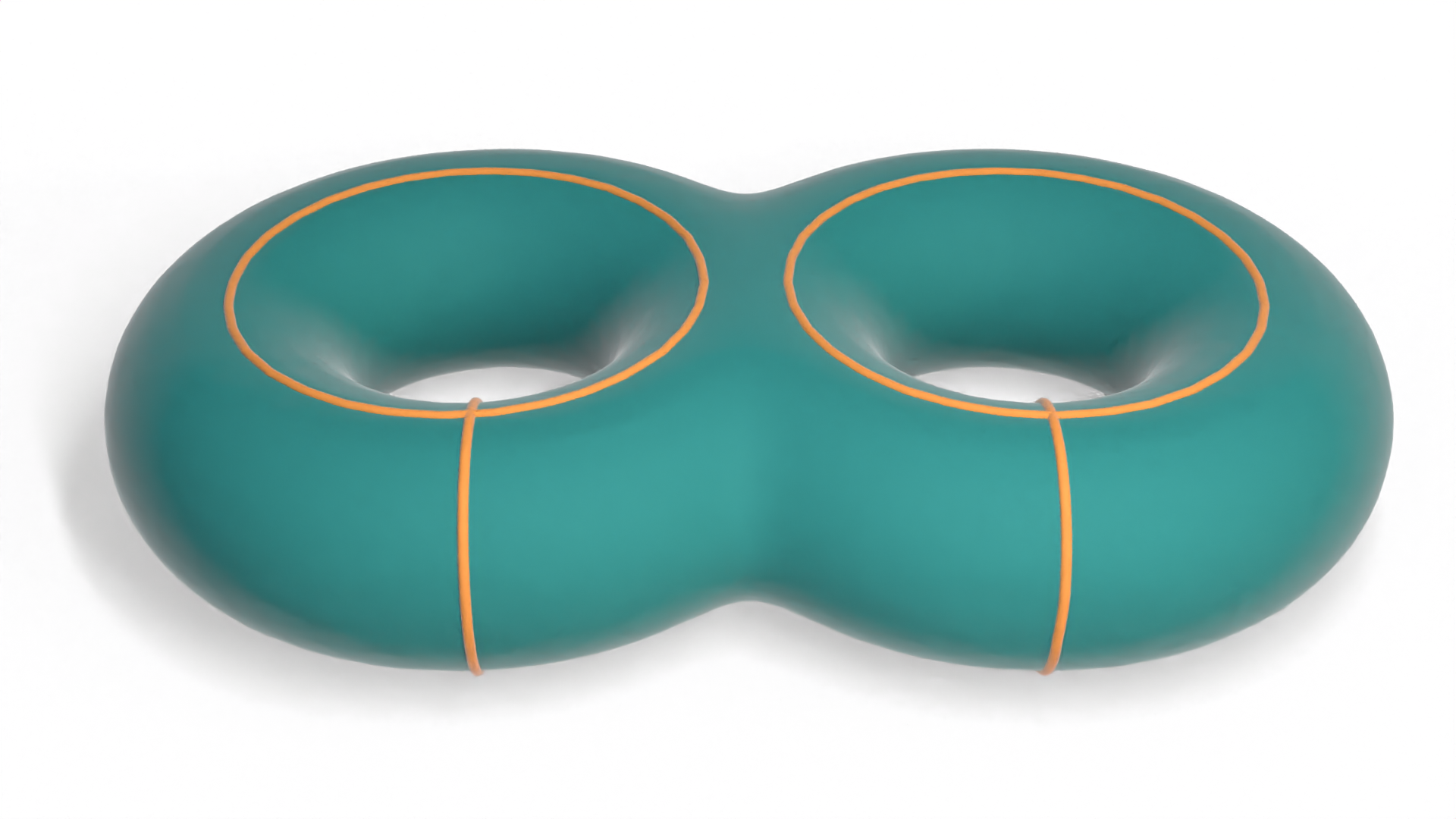

Now, one problem here is that our vector bundle \(E\) is not compact, so the version of Poincaré duality which we've been considering here does not apply directly. But there's a simple fix: rather than considering the entire vector bundle, we can instead build a disk bundle \(D(E)\) where we consider only a compact disk \(D^d_x \subset \RR^d_x\) from each fiber. So long as our base space \(B\) is compact, the graph of any section \(\sigma\) will be a compact subset of \(E\), so it will be contained in such a disk bundle. And of course, every disk bundle contains the zero section.

With that technical detail taken care of, we can look for a cohomology class in our disk bundle which is the Poincaré dual of the zero section. Of course, since our disk bundle is a manifold with boundary, this class should lie in the relative cohomology group \(H^d(D(E), \partial D(E))\). And the boundary of our disk bundle is precisely the sphere bundle \(S(E)\) over \(B\). So the Poincaré dual of the zero section should be a cohomology class \(c \in H^d(D(E), S(E))\). It turns out that this class is precisely the Thom class of our vector bundle.

The Thom class for an oriented vector bundle \(E\) is usually defined as a cohomology class \(c \in H^d(D(E), S(E))\) such that for any point in the base space \(x \in B\), the restriction of \(c\) to the fiber \((D^d_x, S^{d-1}_x)\) yields the generator of \(H^d(D^d_x, S^{d-1}_x)\) which agrees with the orientation of the fiber \(\RR^d_x\). Essentially, this means that if we evaluate \(c\) on a positively-oriented fiber, we should get 1. But this is exactly what the Poincaré dual gives on each fiber, since there is 1 intersection between each fiber and the zero section.
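
In symbols, the defining condition reads as follows. The names \(\iota_x\) and \(u_x\) are my own notation, introduced just for this sketch: \(\iota_x : (D^d_x, S^{d-1}_x) \to (D(E), S(E))\) is the inclusion of the fiber over \(x\), and \(u_x\) is the generator picked out by the orientation of \(\RR^d_x\). Then for every \(x \in B\) we require \[\iota_x^*(c) = u_x \in H^d(D^d_x, S^{d-1}_x) \cong \ZZ, \qquad \langle u_x, [D^d_x, S^{d-1}_x] \rangle = 1.\] The right-hand equation is the statement that a positively-oriented fiber meets the zero section exactly once, with sign \(+1\).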

Restricting to the Euler class

So now we have a nice picture of the Thom class \(c \in H^d(D(E),S(E))\): it is the Poincaré dual of the zero section of the disk bundle \(D(E)\) (and hence also the dual to all other sections as well), and provides information about where the zeros of our section lie in \(D(E)\). And for a more direct description of the zeros, we can restrict \(c\) to the base space \(B\) (viewed as the zero section of \(E\)). This restriction yields the Euler class \(e \in H^d(B)\). For any \(d\)-dimensional submanifold \(S \subseteq B\), the value \(e(S)\) of the Euler class evaluated on \(S\) is equal to the value of the Thom class evaluated on \(S\), viewed as a submanifold of the disk bundle, which is precisely the signed number of intersections between \(S\) and the graph of any section of \(E\)!
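
Written out, the restriction is a composition of two maps. The labels \(j\) and \(s_0\) are my own: \(j^*\) forgets the relative condition along \(S(E)\), and \(s_0 : B \to D(E)\) is the zero section. With that notation, \[e = s_0^*\, j^*(c), \qquad H^d(D(E), S(E)) \xrightarrow{\ j^*\ } H^d(D(E)) \xrightarrow{\ s_0^*\ } H^d(B).\] As a familiar check (a standard fact, not derived in this post), take \(E = TS^2\), so that \(n = d = 2\) and we can take \(S = B = S^2\). Then \[\langle e(TS^2), [S^2] \rangle = \chi(S^2) = 2,\] which matches the zero count for the gradient of the height function: it vanishes at the two poles, and each zero has index \(+1\).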

The Thom isomorphism theorem

Finally, we can use Poincaré duality to understand the Thom isomorphism theorem. If we let \(p : D(E) \to B\) denote the projection from our disk bundle onto the base space \(B\), then the Thom isomorphism theorem states that the mapping \[\begin{aligned} \Phi : H^k(B) &\to H^{k+d}(D(E), S(E))\\ x &\mapsto p^*(x) \smile c \end{aligned}\] is an isomorphism. (As always, \(d\) is the rank of our vector bundle \(E\), and \(c \in H^d(D(E), S(E))\) is the Thom class.)

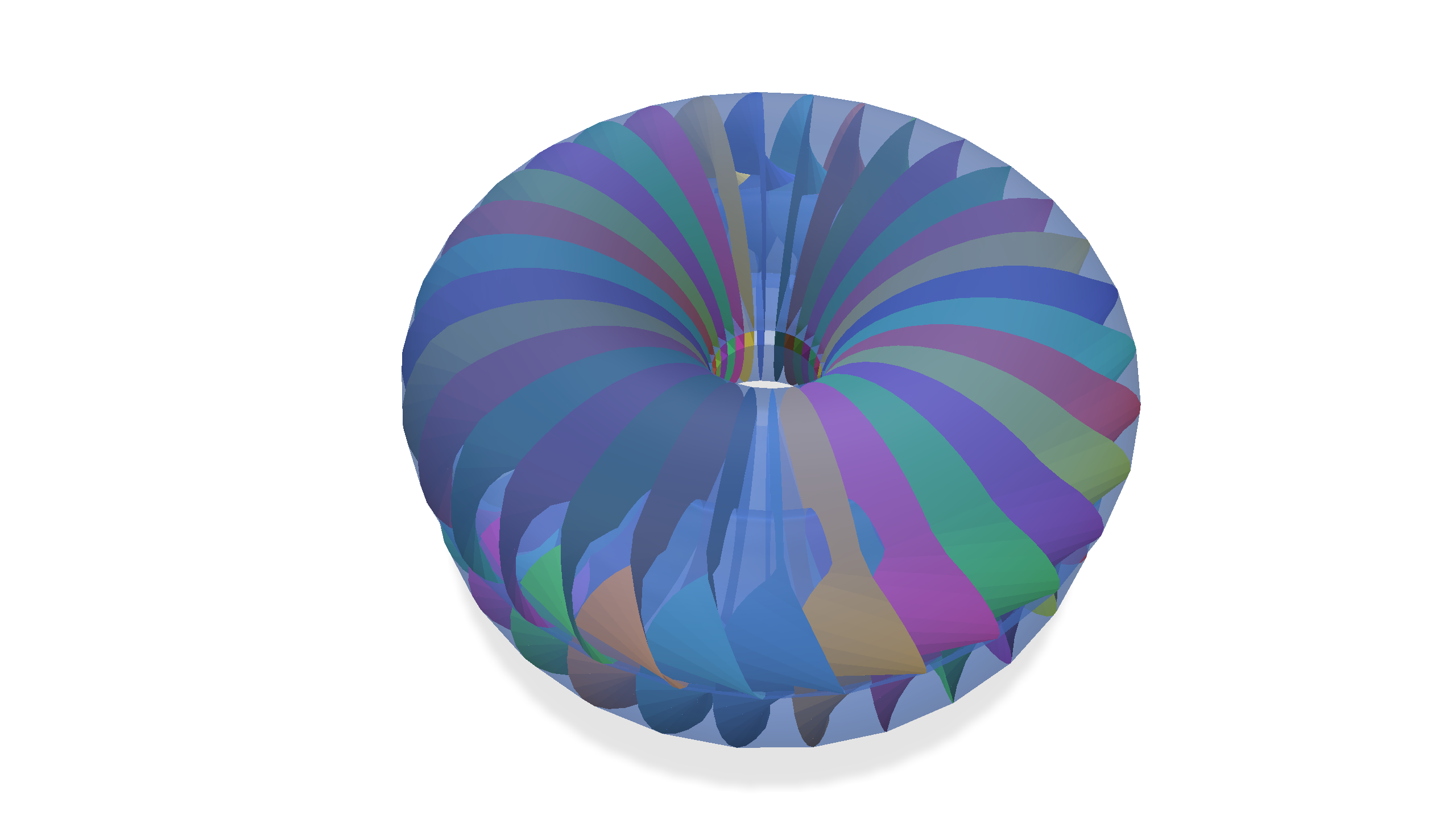

In de Rham cohomology, where our cohomology classes are represented by differential forms, you can view the inverse mapping as partial integration over the fibers of \(D(E)\): taking a \((k+d)\)-form on \(D(E)\) and integrating it over the \(d\)-dimensional fibers yields a \(k\)-form on the base space \(B\).
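
Here is a short check that fiber integration really does undo \(\Phi\). This is a sketch under some assumptions: I write \(p_*\) for integration over the fibers (my notation), and I assume \(c\) is represented by a closed \(d\)-form that vanishes near \(S(E)\) and integrates to 1 over each fiber. The projection formula for fiber integration then gives \[\begin{aligned} p_*\big(\Phi(x)\big) &= p_*\big(p^*(x) \wedge c\big)\\ &= x \wedge p_*(c)\\ &= x, \end{aligned}\] since \(p_*(c)\) is the constant function 1 on \(B\). So \(p_* \circ \Phi\) is the identity on \(H^k(B)\).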

Abstractly, the fact that these cohomology groups are isomorphic is not hard to establish. By Poincaré duality, we have isomorphisms \(H^k(B) \cong H_{n-k}(B)\) and \(H^{k+d}(D(E),S(E)) \cong H_{n-k}(D(E))\), and these two homology groups are isomorphic because \(D(E)\) deformation retracts onto \(B\).
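
Putting the pieces in one line makes the degree bookkeeping easy to verify. Assuming \(B\) is compact and oriented and \(E\) is oriented, so that \(D(E)\) is a compact oriented \((n+d)\)-manifold with boundary \(S(E)\), we get \[H^k(B) \cong H_{n-k}(B) \cong H_{n-k}(D(E)) \cong H^{(n+d)-(n-k)}(D(E), S(E)) = H^{k+d}(D(E), S(E)).\] The first isomorphism is Poincaré duality on \(B\), the second comes from the deformation retraction, and the third is Poincaré duality for the manifold with boundary \(D(E)\).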

But why do all of the isomorphisms take this form using the Thom class? First, we can look at the case of \(H^0(B) \cong H_n(B)\). So long as \(B\) is connected and orientable, \(H^0(B)\) is generated by an element dual to the fundamental class \([B] \in H_n(B)\). If we want to map this generator into \(H^{d}(D(E), S(E))\) as outlined above, then we should take this fundamental class \([B]\), include it into the disk bundle \(D(E)\) as the zero section, and then take the Poincaré dual of the zero section. But this is precisely the Thom class \(c\)! Since this isomorphism maps the generator of \(H^0(B)\) to \(c\), it maps \(m \in \ZZ \cong H^0(B)\) to \(mc \in H^d(D(E), S(E))\), which can indeed be written as \(x \mapsto p^*(x) \smile c\).

How about the higher-dimensional cohomology groups? Given a cohomology class \(\eta \in H^k(B)\), we first look for an \((n-k)\)-dimensional submanifold \(\Sigma\) dual to \(\eta\). We then want to include \(\Sigma\) into \(D(E)\) using the zero section and take its dual. If we want to lift \(\Sigma\) up into \(D(E)\), we might try taking the pullback of its dual \(p^*(\eta)\). However, this will generally introduce unwanted contributions in different parts of the fibers of \(D(E)\). To ensure that this pullback only lifts \(\Sigma\) along the zero section, we can explicitly intersect the result with the zero section—which just means taking the product \(p^*(\eta) \smile c\).
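
The same argument can be written as a chain of dualities. This is only a sketch, assuming \(\Sigma\) is a closed oriented submanifold; \(\mathrm{PD}_{D(E)}\) is my own shorthand for the Poincaré dual taken inside \(D(E)\), and \(B\) here means the zero section. \[\begin{aligned} \mathrm{PD}_{D(E)}\big(p^{-1}(\Sigma)\big) &= p^*(\eta) \in H^k(D(E)),\\ \mathrm{PD}_{D(E)}(B) &= c \in H^d(D(E), S(E)),\\ \mathrm{PD}_{D(E)}\big(p^{-1}(\Sigma) \cap B\big) &= p^*(\eta) \smile c \in H^{k+d}(D(E), S(E)). \end{aligned}\] The preimage \(p^{-1}(\Sigma)\) is the part of the disk bundle lying over \(\Sigma\), which has dimension \((n-k)+d\) and hence codimension \(k\) in \(D(E)\). Its intersection with the zero section is just \(\Sigma\) itself, so the last line says that \(\Phi(\eta) = p^*(\eta) \smile c\) is the dual of \(\Sigma\) inside \(D(E)\).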